Fairwinds Debuts Open Source Kubernetes Configuration Tool

Fairwinds today announced the availability of open source Polaris software that can be employed to optimize the configuration of Kubernetes clusters.

Available for download or as a service hosted by Fairwinds, Polaris was developed to make it easier to provide a managed service around Kubernetes clusters. Polaris runs a series of checks based on best practices defined by Fairwinds to ensure a Kubernetes cluster is properly and optimally configured. Kubernetes may be one of the most advanced IT platforms ever developed, but all the knobs and levers available to users make it easy for Kubernetes clusters to be misconfigured.

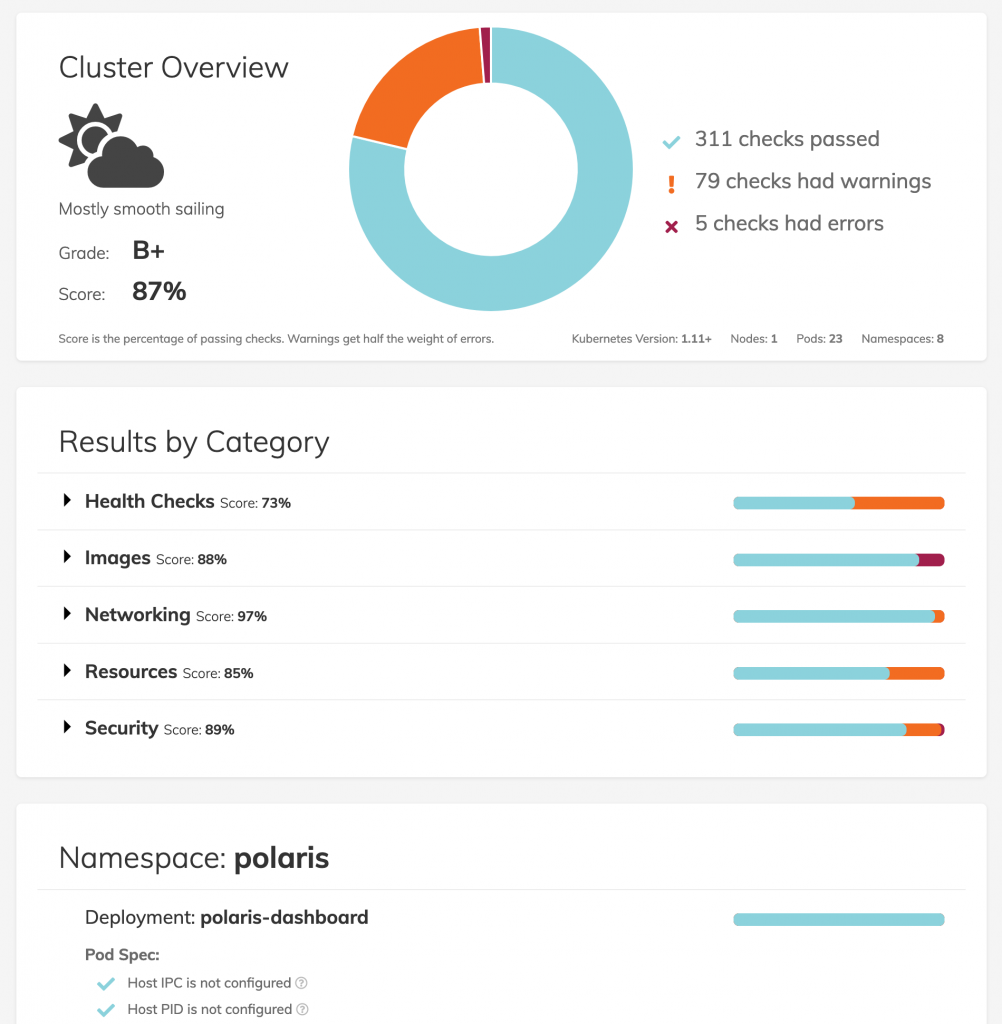

Fairwinds Polaris consists of two main components: a dashboard that provides ratings for clusters that are currently deployed, and a webhook that kicks off a series of checks to identify configuration errors. Based on the severity of those ratings, it is up to individual DevOps teams to decide if they want Polaris to prevent a Kubernetes cluster from being deployed in a production environment.
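To make the idea of severity-rated checks concrete, here is a minimal Python sketch of how a configuration audit and a block-or-allow decision might fit together. It is not Polaris code and does not use the Polaris API: the `Check` class, the three checks, the `warning`/`danger` labels and the `audit` and `admit` functions are all hypothetical names chosen for illustration, and the container is represented as a plain dictionary shaped like a Kubernetes container spec.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    """One best-practice rule (hypothetical structure, not the Polaris schema)."""
    name: str
    severity: str  # assumed labels: "warning" or "danger"
    passes: Callable[[dict], bool]


def has_resource_limits(container: dict) -> bool:
    # Passes when the container declares at least one resource limit.
    return bool(container.get("resources", {}).get("limits"))


def not_privileged(container: dict) -> bool:
    # Passes unless securityContext.privileged is explicitly true.
    return not container.get("securityContext", {}).get("privileged", False)


def pins_image_tag(container: dict) -> bool:
    # Passes when the image has an explicit tag other than "latest".
    # Only the last path segment is inspected so a registry port is ignored.
    name = container.get("image", "").rsplit("/", 1)[-1]
    return ":" in name and not name.endswith(":latest")


CHECKS: List[Check] = [
    Check("resource-limits-set", "warning", has_resource_limits),
    Check("not-privileged", "danger", not_privileged),
    Check("image-tag-pinned", "danger", pins_image_tag),
]


def audit(container: dict) -> List[Check]:
    """Return every check the container fails (what a dashboard would rate)."""
    return [check for check in CHECKS if not check.passes(container)]


def admit(container: dict, block_on: str = "danger") -> bool:
    """Return False when any failed check has the blocking severity."""
    return all(check.severity != block_on for check in audit(container))


if __name__ == "__main__":
    risky = {"image": "nginx:latest", "securityContext": {"privileged": True}}
    for failed in audit(risky):
        print(f"{failed.severity}: {failed.name}")
    print("admitted:", admit(risky))
```

In this sketch, `audit` plays the role of the reporting side and `admit` the role of the gate; the `block_on` parameter reflects the point that each team chooses which severity, if any, should stop a deployment.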

Fairwinds CEO Bill Ledingham says Polaris is designed to help automate the manual processes organizations rely on at the back end of continuous integration/continuous deployment (CI/CD) to spin up a Kubernetes cluster to run their containerized applications.

Fairwinds, itself a managed service provider (MSP), expects many customers will ask it to manage Polaris alongside instances of Kubernetes, he adds. At the same time, however, it’s just as likely rival MSPs will also embrace Polaris to extend the range of Kubernetes services they offer.

Although Kubernetes has been adopted within many enterprise organizations, the percentage of workloads running on the platform remains comparatively thin. A major reason is that the level of expertise required to spin up and then manage a Kubernetes cluster is relatively high. Open source projects such as Polaris should go a long way toward providing a mechanism for distributing best practices for spinning up Kubernetes clusters. Over time, IT organizations can edit those best practices as they see fit, but at the very least Polaris eliminates the need for IT teams to manually write their own configuration documentation, says Ledingham.

Despite the ability to run multiple applications on a Kubernetes cluster, most organizations are still running only one application per cluster, he adds. That has as much to do with their relative application development expertise as it does with the internal politics of an organization. Most companies are made up of multiple divisions that don’t always share resources. Of course, that reluctance to share also sets the stage for Kubernetes sprawl issues further down the road, he says.

Naturally, there are a host of Kubernetes management issues that IT organizations will have to master. However, none of those issues will be addressed if IT teams can’t get past provisioning Kubernetes at scale. In fact, the rate at which containerized applications are likely to be deployed in any enterprise IT environment now has everything to do with the simplicity of provisioning the Kubernetes clusters they depend on.