Docker ecosystem: State of the union

Docker has spread like a forest fire, and the continuous delivery movement’s thirst for containerization has been nothing short of remarkable. A community of organizations has grown up around Docker, building add-on applications that together form a complete system. Combining Docker, cloud infrastructure, and continuous integration and delivery practices creates a highly automated, well-organized way to get new applications and features to market.

Docker has moved beyond being just an enterprise setup to an ecosystem that speeds up the delivery of distributed applications. The ecosystem ensures that companies building monitoring tools integrate with the Docker platform, giving users and organizations a high degree of availability, visibility, and performance for distributed applications.

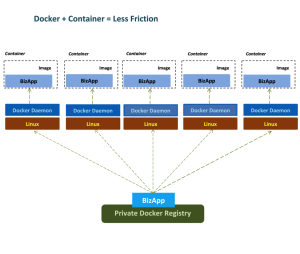

Docker + Containerization

Docker is the most common containerization software in use today. It uses Linux kernel containerization technology to create, secure, and isolate independent containers that run within a single Linux instance. While other containerization systems exist, Docker makes container creation and management simple and integrates with other open source projects. Thanks to this technology, developers can be confident that their applications will run on any Linux machine without worrying about customized settings.

Containers are expected to coexist with other virtualization approaches and pre-existing IT methods. They are especially powerful where application startup time is crucial. The container developer ecosystem is maturing quickly, which helps the deployment ecosystem scale up naturally. Docker, as the cherry on top, adds imaging features and greatly simplifies container management.

Docker – Its Significance

- Docker uses resources lightly because it does not virtualize an entire operating system. It has gained popularity in part because it minimizes dependency issues.

- Docker containers run almost everywhere. All the dependencies for a containerized application are bundled inside the container, allowing it to run on any Docker host. Containers can be deployed on desktops, physical servers, and virtual machines, in data centers, and in public and private clouds.

- Docker provides a container for managing software workloads on shared infrastructure while keeping containers isolated from one another.

- Containers let developers design an application with all its essential parts and deliver it as a package.

- Docker interfaces are standardized and the interactions are predictable: the host does not care what is running inside the container, and the container, on the other hand, does not care which host it is running on.

Docker advanced native networking configuration

Networking plays a vital role when constructing distributed systems to serve Docker containers. Docker itself handles the networking fundamentals needed for container-to-container and container-to-host communication. When the Docker process starts, it configures a new virtual bridge interface on the host system, named docker0. This interface lets Docker allocate a virtual subnet for use among the containers.

Here the bridge acts as the main point of interface between networking within a container and networking on the host. Docker automatically configures iptables rules to allow forwarding and sets up NAT masquerading for traffic that originates on docker0 and is destined for the outside world.
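The bridge behavior described above can be modeled in a few lines. This is only a rough sketch, not Docker's actual allocation or iptables logic: it assumes the commonly seen default subnet `172.17.0.0/16` for docker0 (the subnet on a given host may differ), and the function names `plan_bridge_addresses` and `needs_masquerade` are hypothetical names chosen for this illustration.

```python
import ipaddress

# Assumption: 172.17.0.0/16 is a commonly seen default subnet for docker0.
# The subnet actually chosen on a given host may differ.
BRIDGE_SUBNET = ipaddress.ip_network("172.17.0.0/16")


def plan_bridge_addresses(subnet, container_count):
    """Return (gateway, [container addresses]) for a bridge subnet.

    The first usable address stands in for the bridge itself (the gateway);
    the addresses that follow are handed to containers in order.
    """
    hosts = iter(subnet.hosts())
    gateway = next(hosts)
    containers = [next(hosts) for _ in range(container_count)]
    return gateway, containers


def needs_masquerade(source, destination, subnet=BRIDGE_SUBNET):
    """Simplified model of the NAT rule: traffic that starts inside the
    bridge subnet and leaves it is rewritten to the host's address."""
    src = ipaddress.ip_address(source)
    dst = ipaddress.ip_address(destination)
    return src in subnet and dst not in subnet


gateway, containers = plan_bridge_addresses(BRIDGE_SUBNET, 3)
print("bridge gateway:", gateway)
for index, address in enumerate(containers, start=1):
    print(f"container {index}: {address}")

# Container-to-container traffic stays on the bridge; outbound traffic is NATed.
print(needs_masquerade("172.17.0.2", "172.17.0.3"))
print(needs_masquerade("172.17.0.2", "8.8.8.8"))
```

The real rule matches on the outgoing interface rather than the destination address, so treat `needs_masquerade` as an approximation of the idea rather than a description of the exact iptables rule.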

Networking Tools

Docker is one of the hottest technologies in the system administration space, but it has not been easy for many professionals to get the most from it. The Docker ecosystem has produced a variety of projects that focus on expanding the networking functionality available to operators and developers. Additional tools enable the following capabilities:

- Overlay networking to simplify and unify the address space across multiple hosts.

- Virtual private networks to provide secure communication between components.

- Per-host or per-application subnet assignment.

- Setting up macvlan interfaces for communication.

- Configuring custom MAC addresses, gateways, and so on for your containers.

What Are Some Common Projects for Improving Docker Networking?

- flannel: Overlay network providing each host with a separate subnet. This satisfies a networking requirement of Google’s Kubernetes orchestration tool, but it is useful in other situations as well.

- weave: Overlay network that presents all containers on a single network. This simplifies application routing, as it gives the appearance of every container being plugged into a single network switch.

- pipework: Advanced networking toolkit for arbitrarily complex networking configurations.
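To make the "separate subnet per host" idea concrete, here is a minimal sketch of how one overlay address range could be carved into per-host subnets. It does not use flannel's code or configuration format; the overlay range `10.1.0.0/16`, the `/24` per-host size, the host names, and the function names are all hypothetical values chosen for illustration.

```python
import ipaddress

# Hypothetical values: neither the range nor the host names come from a
# real deployment.
OVERLAY_NETWORK = ipaddress.ip_network("10.1.0.0/16")
HOST_PREFIX = 24
HOSTS = ["host-a", "host-b", "host-c"]


def assign_host_subnets(overlay, host_names, prefix):
    """Give each host the next unused subnet of the requested size."""
    return dict(zip(host_names, overlay.subnets(new_prefix=prefix)))


def host_for(address, assignments):
    """Find which host owns a container address, or None if unassigned."""
    ip = ipaddress.ip_address(address)
    for host, subnet in assignments.items():
        if ip in subnet:
            return host
    return None


assignments = assign_host_subnets(OVERLAY_NETWORK, HOSTS, HOST_PREFIX)
for host, subnet in assignments.items():
    print(f"{host}: {subnet}")

# Because subnets do not overlap, a container address identifies its host.
print(host_for("10.1.1.5", assignments))
```

Because every host owns a distinct, non-overlapping range, a container's address alone is enough to tell which host a packet should be sent to.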

Organizations Tap Docker to Speed Application Delivery

Docker has won developers’ hearts and is increasingly used in production environments. Development teams use Docker to simplify and speed up IT and to improve their Continuous Integration (CI) process by dockerizing their applications from development through production.

Docker allows developers to treat infrastructure as code. Their applications can be easily copied around the infrastructure in a Docker container, from dev to test and from test to production. With the addition of third-party management tools like those described above, Docker infrastructure can be described for developers in a YAML file.

Docker is not all that for distributed applications

Docker as a whole is marketed as the platform that helps developers create distributed applications. Although there are areas where Docker itself has made little impact, the Docker ecosystem has grown to connect the distributed application dots in areas like:

- Networking: A distributed application’s containers need to talk to other containers running elsewhere.

- Motion: A stateless container can simply be destroyed, but a stateful container needs to be moved between hosts.

- Orchestration: A management system is required to make containers work together.

- Extension management: Helps in adding optional extras to Docker.

- Service discovery: A new containerized application needs an authoritative source to tell it where to find services.
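As an illustration of the service discovery item, the sketch below shows the basic register-and-lookup pattern with an in-memory registry. It is a toy model under stated assumptions, not any particular tool's API: the `ServiceRegistry` class, the service name `db`, and the addresses are hypothetical, and a real deployment would need a registry that is shared across hosts rather than a local dictionary.

```python
class ServiceRegistry:
    """Toy in-memory registry: the 'authoritative source' for endpoints."""

    def __init__(self):
        self._services = {}

    def register(self, name, host, port):
        """Record that a container offering `name` listens at host:port."""
        endpoints = self._services.setdefault(name, [])
        if (host, port) not in endpoints:
            endpoints.append((host, port))

    def deregister(self, name, host, port):
        """Remove an endpoint, for example when its container is destroyed."""
        endpoints = self._services.get(name, [])
        if (host, port) in endpoints:
            endpoints.remove((host, port))

    def lookup(self, name):
        """Return a copy of the known endpoints for a service."""
        return list(self._services.get(name, []))


registry = ServiceRegistry()

# Hypothetical endpoints for two database containers.
registry.register("db", "10.1.0.2", 5432)
registry.register("db", "10.1.1.2", 5432)

# A newly started application container asks where to find the database.
print(registry.lookup("db"))

# When a container goes away, its endpoint is withdrawn.
registry.deregister("db", "10.1.0.2", 5432)
print(registry.lookup("db"))
```

The point of the pattern is that application containers never hard-code each other's addresses; they ask the registry at startup, which keeps working as containers are created, moved, and destroyed.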

Final Thoughts

By now almost everyone is familiar with the general function of most of the software associated with the Docker ecosystem. Docker and Linux containers are growing at a fast pace, so expect to see an array of new developments over the coming years.

Although the Docker ecosystem is young, it has filled many white spaces in a short time. Providing internal and external services through containerized components is a very powerful model, but networking considerations become a priority. While Docker provides some of this functionality natively through the configuration of virtual interfaces, subnetting, iptables and NAT table management, other projects have been created to provide more advanced configurations.

Sudhi, a technology entrepreneur, brings 19+ years in software, cloud technologies, IT operations and management. He has led several global teams at HP, Sun/Oracle, and SeeBeyond. He has built highly scalable and highly available products, systems management, monitoring, and integrated SaaS and on-premise applications. Currently, as part of the Xervmon offering, he is building https://www.analyzr.io https://www.xdock.io @Xervmon. He is a trusted advisor and consults with companies of all sizes to establish DevOps practices and implement Docker-based CI/CD or AWS deployments at a cost-effective scale. Connect with Sudhi on Twitter.

It’s early in the adoption of container based architectures, but it’s notable that Docker is the one thing supported by Microsoft, Red Hat, Google, and AWS. Developers and DevOps will increasingly view the Container and surrounding services as the “Operating Platform” and the underlying OS will be legacy infrastructure.