Kubermatic Kubernetes Platform Supports OPA Integration

For all the enormous advantages it offers, Kubernetes has drawbacks, including the lack of universal security management features, both for clusters and for the containers it was designed to orchestrate. Historically, authorization and policy control for containers, for example, required completely different APIs, tools and user interfaces (UIs), further compounding the complexity of Kubernetes for container orchestration. At the same time, Kubernetes operators have lacked uniformity within their platforms for policy management.

With the Open Policy Agent (OPA), to which the Cloud Native Computing Foundation (CNCF) recently granted “graduation” status, DevOps teams can rely on a single API and framework to set all policies for applications in Kubernetes and microservices environments. The production version of OPA was especially well received following the recent deprecation of pod security policy, which was used to set policy for pods.

As a potential solution, Kubermatic Kubernetes Platform (KKP), a multi-cluster and multi-cloud Kubernetes platform, integrates OPA’s policy-setting capability on Kubernetes as of its 2.16 release. The release also features machine learning (ML) frameworks, new admin controls and other capabilities covered in this article.

Kubermatic opted to integrate OPA into its Kubernetes platform to offer DevOps teams improved policy control over the entire cloud-native stack, including microservices, Kubernetes, CI/CD pipelines and API gateways, says Sascha Haase, vice president for edge at Kubermatic.

“We clearly felt a gap regarding authorization, governance and policies of Kubernetes infrastructure, in particular since the deprecation of pod security policy was announced,” Haase says.

As mentioned above, KKP users, as well as Kubernetes users in general, previously relied on pod security policies to control “security sensitive aspects” of the pod specification, Haase says. At the same time, pod security policies did have limitations. Haase attributes Kubernetes SIG Auth’s decision to deprecate pod security policy in the upcoming Kubernetes 1.21 version to “major shortcomings in its design.”

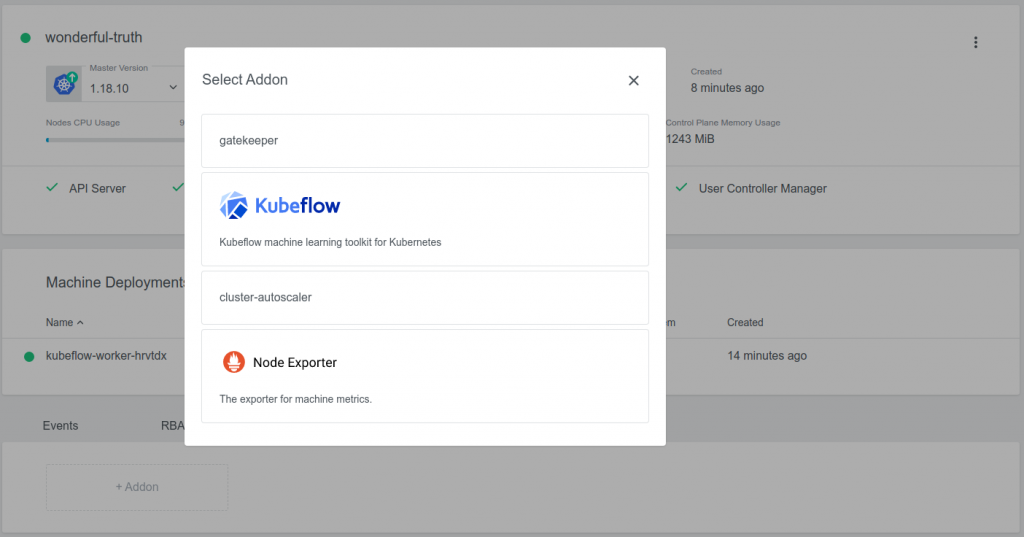

Haase says DevOps teams using KKP 2.16 will especially appreciate how OPA, as an open source, general-purpose policy engine, helps unify policy enforcement across the entire stack. KKP integrates with OPA using Gatekeeper, a Kubernetes-native policy engine built on OPA. The integration is enabled per user cluster, meaning that it is activated by a flag in the cluster spec. When this flag is set to true, KKP automatically deploys the needed Gatekeeper components to the control plane, as well as to the user cluster, Haase explains.
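
To make the idea of policy enforcement concrete, here is a minimal sketch in Python of the kind of check a policy engine applies to a pod specification before admitting it. This is an illustration only: Gatekeeper policies are written in OPA's Rego language, not Python, and the function name and example manifest below are hypothetical. The only real detail assumed is the standard Kubernetes pod field `securityContext.privileged`.

```python
from typing import Any, Dict, List


def privileged_container_violations(pod: Dict[str, Any]) -> List[str]:
    """Return one message per container that requests privileged mode.

    Illustrative stand-in for a policy rule; not Gatekeeper's actual API.
    """
    spec = pod.get("spec", {})
    violations: List[str] = []
    # Inspect regular and init containers, since both can request privileges.
    for field in ("containers", "initContainers"):
        for container in spec.get(field, []):
            security_context = container.get("securityContext") or {}
            if security_context.get("privileged") is True:
                name = container.get("name", "<unnamed>")
                violations.append(f"container '{name}' must not run privileged")
    return violations


# Hypothetical pod manifest, used only for illustration.
example_pod = {
    "metadata": {"name": "demo"},
    "spec": {
        "containers": [
            {"name": "app", "image": "nginx"},
            {
                "name": "debug",
                "image": "busybox",
                "securityContext": {"privileged": True},
            },
        ]
    },
}

if __name__ == "__main__":
    for message in privileged_container_violations(example_pod):
        print(message)
```

An empty result means the pod passes this particular rule. In a real cluster, the equivalent rule would be expressed once as a policy and enforced at admission time rather than called by hand.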

Other features KKP 2.16 offers include:

Dynamic Data Centers and Other Enhanced Admin Configurations

Platform administrators are able to “dynamically” configure data centers they select and “can more easily add new ones,” Haase says.

“In order to adjust at any given point in time, our users are now able to dynamically configure the data centers they want to use, create presets to match the role-based access control and efficiently manage the resource consumption,” Haase says. “Dynamic configuration of data centers thus allows the administrators to enable new regions on the fly.”

Preset management also allows admins to configure development and production environments that “their users just choose when creating a new Kubernetes cluster,” Haase says. “It has every configuration attached, and they can configure consumption centrally and adjust in real-time if needed.”

Machine Learning with Kubeflow Addon (Preview Feature)

The Kubeflow Addon allows for automated installation of Kubeflow, the ML toolkit for Kubernetes, with Kubeflow authentication integrated with KKP.

“With the Kubeflow Addon installed, users can easily deploy Kubeflow into a user cluster,” Haase says. “The KKP UI will provide several options that can be used to customize the Kubeflow installation.”

ARM Support

As Haase notes, ARM architectures for software stacks are becoming more popular in data centers, while hyperscalers “push ARM-based approaches.”

“Edge scenarios are using ARM as well, as they are advantageous for the power-to-performance ratio, such as for most mobile phones, which use ARM-powered CPUs and GPUs,” Haase says. “In our ongoing effort to support the broadest possible infrastructure, we enabled ARM support and made it work as [well] as x86 supported machines. With our integration, it is now possible to manage ARM-based clusters without any effort.”